Imagine what a mission could accomplish if it were possible to put the combined expertise of the science and operations teams onboard a spacecraft. It could detect events and then use that information to determine its next actions in real time without input from humans. Event-driven autonomous operations will be key to a new class of missions that could accomplish amazing things—from traversing subterranean caves on Mars, to unlocking the secrets of turbulence in the solar wind, to exploring under the ice of Europa.

Mission operations engineers face the daunting tasks of maintaining the health and functionality of a spacecraft and its payload, capturing high-value data, and responding to a dynamically evolving environment. They must plan activities days or weeks into the future using very limited information. These tasks become even more complicated when one factors in the lengthy time between spacecraft contacts, the light-time delay of bidirectional communications, the transient nature of ephemeral science targets, and the prospect of multiple spacecraft operating simultaneously.

In an effort sponsored by the Space Technology Mission Directorate’s Small Business Innovation Research/Small Business Tech Transfer (SBIR/STTR) program, Aurora Engineering has developed an onboard autonomous operations agent that can help mitigate these challenges. Rather than a ground team planning spacecraft activities according to a schedule that is determined weeks in advance and is often based on predictive models with significant limitations, the Module for Event Driven Operations on Spacecraft (MEDOS) drives onboard operations by detecting events as they occur and determining a rational response the spacecraft can make in real time.

The MEDOS autonomous operations agent takes raw telemetry from multiple sources and fuses it in real time to derive physically significant parameters. These parameters are then compared to known events and, based on these comparisons, MEDOS concludes, with a transparent and easily understood confidence measure, that a given event is occurring, so that it can decide on the appropriate autonomous response. Unlike many machine learning applications, MEDOS does not require volumes of labeled training data, and it does not require precise numeric values to compare against. Rather, MEDOS incorporates uncertainty into its classification of events by encoding years of subject matter expert experience into an easy-to-understand mathematical construct.
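One way to picture how expert judgment can be encoded without a precise numeric cutoff is a soft membership function, which turns a derived parameter into a confidence between 0 and 1. The article does not specify the mathematical construct MEDOS actually uses, so the sketch below is only an illustration: the function name `soft_membership`, the linear ramp, and the threshold values are all assumptions.

```python
def soft_membership(value: float, low: float, high: float) -> float:
    """Return a confidence in [0, 1] that `value` counts as 'elevated'.

    Below `low` an expert would say 'definitely not'; above `high`,
    'definitely yes'. In between, confidence ramps linearly, so no
    single precise cutoff is required.
    """
    if high <= low:
        raise ValueError("high must be greater than low")
    if value <= low:
        return 0.0
    if value >= high:
        return 1.0
    return (value - low) / (high - low)


# Hypothetical expert rule (made-up numbers): a particle count rate
# becomes 'elevated' somewhere between 100 and 500 counts per second.
for rate in (50.0, 300.0, 800.0):
    print(rate, soft_membership(rate, low=100.0, high=500.0))
```

A ramp like this needs only two numbers from a subject matter expert, which may be part of why such constructs stay easy to inspect and explain.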

By combining multiple derived parameters—each weighted appropriately—MEDOS arrives at an overall assessment. For example, as shown in Figure 2, MEDOS takes the raw data from onboard instruments and derives physical parameters to indicate changes in the signal density, the environment, etc. A sudden, coordinated change across multiple parameters (e.g., the energy and density of particle populations and the magnetic field activity simultaneously increase) could indicate the onset of a significant space weather event. Once MEDOS recognizes the signature in the data and flags the corresponding measurements as a “Space Weather Event,” it then prioritizes the data for downlink.
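The weighted combination described above can be sketched as a weighted average of per-parameter confidences. This is a minimal illustration under stated assumptions, not the MEDOS implementation: the parameter names, the scores, the weights, and the choice of a weighted average are all hypothetical.

```python
def combine_confidences(scores: dict, weights: dict) -> float:
    """Weighted average of per-parameter confidences, each in [0, 1]."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight


# Hypothetical per-parameter confidences that each quantity has increased.
scores = {
    "particle_energy": 0.9,
    "particle_density": 0.8,
    "magnetic_activity": 0.7,
}
# Hypothetical weights: here particle energy is trusted twice as much.
weights = {
    "particle_energy": 2.0,
    "particle_density": 1.0,
    "magnetic_activity": 1.0,
}

overall = combine_confidences(scores, weights)
print(f"Space Weather Event confidence: {overall:.3f}")
```

Because the result is an average, one noisy parameter shifts the overall confidence gradually rather than flipping the decision outright.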

Since several different events might credibly explain the observations, MEDOS assigns each possible event a computed likelihood (the confidence percentages shown for detected events in Figure 2). If, for example, the signal is always coming from the same direction in space regardless of spacecraft orientation, the signal is likely a real event measured from the local environment. If, however, the signal is always coming from the same corner of the spacecraft and rotates with the spacecraft, the signal is more likely due to a hardware issue, reflection, etc. Given this information, the spacecraft can then execute a rational responsive action.
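The direction test in this example can be sketched by asking in which reference frame the signal direction stays put. The code below is an illustrative assumption rather than the MEDOS algorithm: it measures how tightly the arrival angles cluster in the spacecraft body frame versus a space-fixed frame, then normalizes the two scores into relative likelihoods. The function names, the single-axis spin geometry, and the normalization are all hypothetical, and the resulting numbers are not calibrated probabilities.

```python
import math


def resultant_length(angles_deg):
    """Mean resultant length: 1.0 if all angles agree, near 0.0 if spread out."""
    c = sum(math.cos(math.radians(a)) for a in angles_deg)
    s = sum(math.sin(math.radians(a)) for a in angles_deg)
    return math.hypot(c, s) / len(angles_deg)


def rank_hypotheses(body_angles_deg, spin_phases_deg):
    """Score two explanations for a signal seen on a spinning spacecraft.

    body_angles_deg: arrival direction measured in the spacecraft frame.
    spin_phases_deg: spacecraft rotation angle at each measurement.
    """
    # Rotate each body-frame angle into a space-fixed frame.
    inertial = [(b + p) % 360.0
                for b, p in zip(body_angles_deg, spin_phases_deg)]
    r_space = resultant_length(inertial)        # fixed in space?
    r_body = resultant_length(body_angles_deg)  # fixed to the spacecraft?
    total = r_space + r_body
    if total == 0.0:
        return {"environmental_signal": 0.5, "hardware_artifact": 0.5}
    return {
        "environmental_signal": r_space / total,
        "hardware_artifact": r_body / total,
    }


phases = [0.0, 90.0, 180.0, 270.0]
# Signal fixed in space at 40 degrees: its body-frame angle changes with spin.
real_signal = [(40.0 - p) % 360.0 for p in phases]
# Signal stuck at 120 degrees in the body frame: it rotates with the spacecraft.
artifact = [120.0] * len(phases)

print(rank_hypotheses(real_signal, phases))
print(rank_hypotheses(artifact, phases))
```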

By encapsulating the subject matter expertise of multiple ground-based teams, MEDOS can algorithmically encode their rules of ‘what to look for’ into knowledge that the spacecraft can act upon without human intervention. And because MEDOS fuses multiple data products, each with its own random and systematic noise, its autonomous decision-making is robust against imprecision in those values. For example, if there are multiple scientific definitions of an event, or if the sensor data is not well calibrated, the overall picture and associated detection would remain the same, but MEDOS would assign a lower confidence.

NASA’s Magnetospheric Multiscale (MMS) mission has flight-verified and successfully demonstrated MEDOS. The MMS mission consists of four identical spacecraft designed to investigate how the Sun’s and Earth’s magnetic fields connect and disconnect, explosively transferring energy from one to the other in a process known as magnetic reconnection. Magnetic reconnection is a fundamental process that powers a wide variety of space plasma events, from giant explosions on the Sun to the geospace weather that affects modern technological systems such as telecommunications networks, GPS navigation, and electrical power grids.

On MMS, MEDOS’ onboard activities involved taking raw science instrument data (e.g., counts and voltages) and transforming them into scientifically meaningful parameters such as plasma temperature, object velocity, mass fraction of a gas, relative separation, etc. Using these parameters, MEDOS determined the probability that an MMS instrument was experiencing penetrating radiation, which would require a stand down of the instrument’s high voltage system.

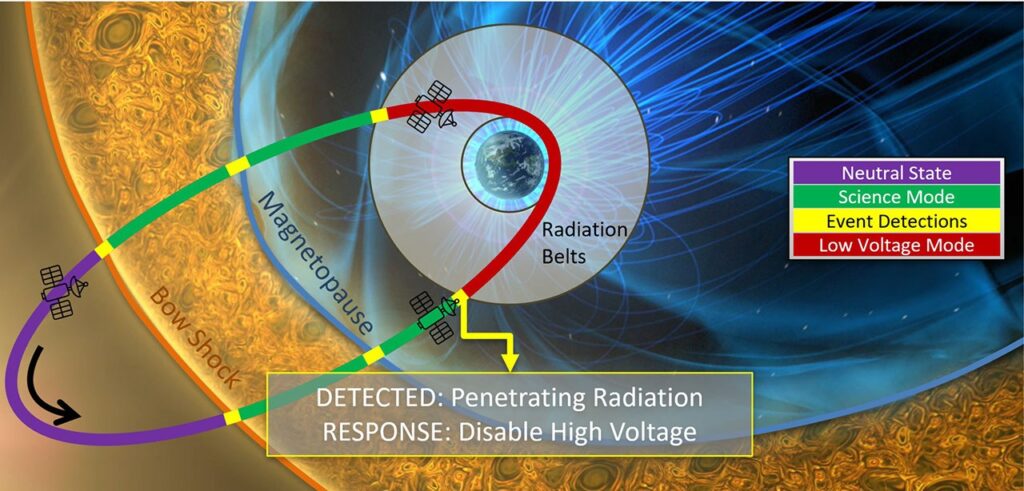

For example, MEDOS detected a radiation event in real time (an MMS passage through the Van Allen radiation belts) that was not predicted by ground systems, and determined a corresponding action for the spacecraft. Figure 3 shows a schematic of one MMS orbit (in green) superimposed with event durations predicted by ground-based planning (blue arcs) and with those from actual MEDOS flight data (red arcs). The ground prediction (blue) and the associated time-tagged operational commands (determined weeks in advance) could not anticipate the inflation of the radiation belt that occurred in response to recent changes in the space weather environment. As a result, the predetermined command load failed to step down the high voltage system during one of the MMS passages through the outer radiation belt, increasing the risk of instrument damage. However, MEDOS detected both passages through this shifted radiation belt (red arcs) and raised a flag to disable high voltage during these periods. A report on this topic was presented at the 18th International Conference on Space Operations in 2025.
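The contrast between a time-tagged command load and event-driven detection can be sketched with a toy example. Everything below is an assumption made for illustration: the confidence values, the thresholds, the use of hysteresis (separate on and off thresholds), and the function names are hypothetical and are not taken from MEDOS or MMS flight data.

```python
def detect_windows(confidence, on_threshold=0.7, off_threshold=0.3):
    """Return (start, end) sample-index pairs where the flag is raised.

    The flag is raised when confidence reaches `on_threshold` and is
    cleared only when it drops below `off_threshold`, so brief dips do
    not toggle it. `end` is the first index with the flag cleared.
    """
    windows = []
    start = None
    for i, c in enumerate(confidence):
        if start is None and c >= on_threshold:
            start = i
        elif start is not None and c < off_threshold:
            windows.append((start, i))
            start = None
    if start is not None:
        windows.append((start, len(confidence)))
    return windows


def uncovered_by_schedule(detected, scheduled):
    """Detected windows that overlap no scheduled (time-tagged) window."""
    return [d for d in detected
            if not any(d[0] < s[1] and s[0] < d[1] for s in scheduled)]


# Made-up radiation confidence along one orbit, one value per time step.
confidence = [0.1, 0.2, 0.8, 0.9, 0.5, 0.2, 0.1, 0.1, 0.75, 0.9, 0.6, 0.1]
# Made-up ground plan that anticipated only the first belt passage.
scheduled = [(2, 5)]

detected = detect_windows(confidence)
print("detected:", detected)
print("missed by schedule:", uncovered_by_schedule(detected, scheduled))
```

In this toy case the onboard detector flags two passages, while the fixed schedule covers only one, which mirrors the situation described for the shifted radiation belt.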

This demonstration shows the value of real-time, in-situ detection of dynamic transient events rather than reliance on predicted or human-in-the-loop responses. Additionally, MEDOS is well suited to recommend activities that are routine but require specific conditions, such as calibration sequences, boundary crossings, and region classification.

As space missions become increasingly complex, autonomous operation will become even more vital. MEDOS encodes human knowledge into spacecraft operations in a way that is explainable, transparent, computationally lightweight, and trustworthy. This type of technology could potentially help enable the next generation of NASA missions exploring destinations like the Moon, Mars, and the outer planets.

For additional details, see the entry for this project on NASA TechPort and the associated report on the NASA Technical Reports Server.

Project Lead(s): Dr. Alexander Barrie, Aurora Engineering

Sponsoring Organization(s): NASA STMD supported development of MEDOS. Support for the MMS flight demonstration was provided by the NASA Heliophysics Division and Southwest Research Institute.