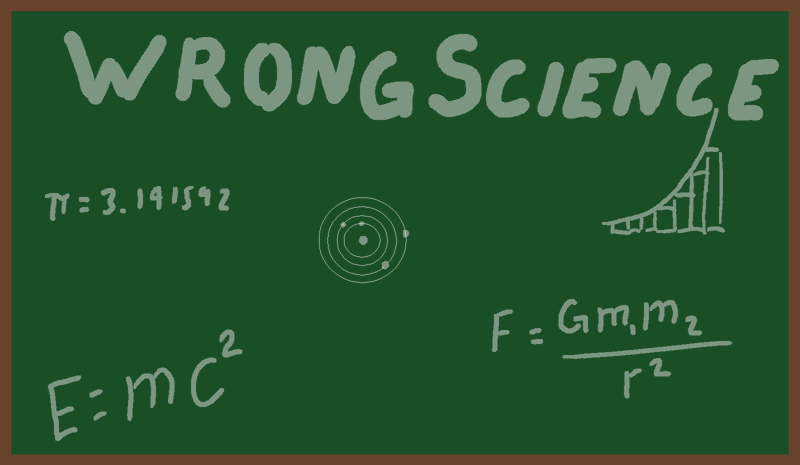

Most of us trust science and math as absolute truths, but what if many of our assumptions are flawed? From changing measurements of the speed of light to unexplained levitation and spiritual experiences, there are mysteries that seem to slip through the cracks of modern science. Could our understanding of reality be far less solid than we think?

by davideownzall

3 Comments

Despite what the Guardians of the Status Quo would have you believe, we don’t know “much of anything”, as the saying goes.

We just discovered the fundamentals of science a century or two ago.

Now we’re at the very beginning of a new technological millennium. What will our knowledge be like, say, four hundred years from now, if today’s pace continues, aided by AI?

Oh and lest we forget. A former president just disclosed, officially, that aliens exist! No need for Carl Sagan’s “extraordinary evidence”, I trust Barack Obama over anyone. His statement is the essence of extraordinary!

So yeah. Maybe our scientific assumptions aren’t “completely backwards”. But they are completely amateurish, in light of what’s coming.

Because, as Arthur C. Clarke said, the universe isn’t stranger than we imagine, it’s stranger than we can imagine.

Not really. The way that physics is built up is by using math to make educated guesses and comparing those to evidence, rejecting any math that doesn’t line up with reality.

If it was completely wrong then modern tech wouldn’t work at all. If new data shows up that disagrees with current theory, then they just make a new one that fits the old data and the new data. This has happened 4 or 5 times in recent history (e.g. Galileo & Kepler -> Newton -> Einstein).

Physics makes no claims to be absolutely correct, just the least wrong we’ve been able to come up with.

Personally, I’m of the opinion that anomalous phenomena are just examples of data that physics might need to accommodate, if we could only gather enough of the data numerically.

I’m not sure what you’re referring to with changing measurements of the speed of light.

The speed of light in a vacuum was derived via Maxwell’s equations, and the measurements have only become more refined since, i.e. the error bars have shrunk.

With regard to light changing speed in different mediums, this is due to the medium’s refractive index, not because the speed of light fundamentally changes. Without getting into the nuances of quantum field theory (QFT, not to be mistaken for quantum Fourier transforms, which are just as useful but tricky), the light effectively bumps into atoms in the medium, slowing its travel through the medium but not reducing its actual speed. Between atoms, it still travels at c (obviously there’s more complexity to this in the real world). I know you didn’t mention this, but someone is bound to bring it up.
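To put rough numbers on the refractive index point, here is a minimal sketch. The function name `speed_in_medium` and the index values are my own illustrative choices (approximate textbook figures for visible light), not anything taken from the article.

```python
# Speed of light in vacuum in m/s (exact by definition in the SI).
C = 299_792_458


def speed_in_medium(n: float) -> float:
    """Effective (phase) speed of light in a medium with refractive index n."""
    if n <= 0:
        raise ValueError("refractive index must be positive")
    return C / n


# Approximate refractive indices for visible light (illustrative values only).
media = {"vacuum": 1.0, "water": 1.33, "glass": 1.5, "diamond": 2.42}

for name, n in media.items():
    print(f"{name:8s} n = {n:<5} v = {speed_in_medium(n):,.0f} m/s")
```

The constant `C` never changes in this picture; only the ratio `C / n` does, which is the distinction I’m making above.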

What you’ve said in the article is totally incorrect. The speed of light never changed. Its value was fixed by definition so that units could be based on fundamental constants. Before this, for example, a kilogram was determined by taking a batch of metal, dividing it into lumps that all weighed the same amount, and distributing them around the world so everyone had an official reference.

Over time, they found that, due to decay, each person’s lump had changed by a different amount. They needed something much less arbitrary, hence the speed of light was used, along with other constants such as Planck’s constant. Your description of this process is purposefully misleading.

I believe the derivation of 1 = -1 famously requires you to perform an illegal operation and divide by 0 at some point. Additionally, isn’t this high school level maths? Do you really think that, if this were a global conspiracy, 100 years of science couldn’t come up with a more robust lie than one that can be defeated by a 16-year-old in high school?

With regard to asymptotes and calculus, I think you may want to revisit your textbooks. You might have missed something there, along with some of the nuance behind the maths.
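For anyone who hasn’t seen the trick, here is one common form of it. I don’t know which derivation the article has in mind, so treat this as an assumed, illustrative version; the symbols a and b are mine.

```latex
\begin{align*}
a &= b && \text{(assumption)} \\
a - b &= b - a && \text{(true, since both sides are } 0\text{)} \\
a - b &= -(a - b) \\
\frac{a - b}{a - b} &= -\frac{a - b}{a - b} && \text{(illegal: } a - b = 0\text{)} \\
1 &= -1
\end{align*}
```

Every step is fine until the division, where both sides are divided by a − b, which is zero by the first line.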

This can’t be real. Superconductivity swept under the rug? A ridiculous amount of money has been poured into this over the past couple of decades. It hasn’t been hidden from anyone. Academic papers contain methods sections justifying their statistical and experimental techniques. You could easily have read them over the past 30-40 years and seen their steady progression. Science is hard and takes time to improve.

We even have superconductors in a space telescope now (Japan’s X-ray telescope XRISM; look up how it works, it’s quite cool).

Science will continue to change, but not in the way you describe. It will become more refined over time with better observational instrumentation, instrumental and theoretical techniques, and computing power. For example, we know GR works as a theory of gravity. We know this because we can see it in many different phenomena that it describes extremely well. Is it the be-all and end-all? No, but any advancement is going to include all of GR and then extend it.

I’m also not sure what scientific assumptions you’re referring to or why they would be backwards. You didn’t mention any. Most assumptions we make are fairly educated guesses and quite well informed. For example, the assumption that supernova feedback can be described stochastically, and treated as the dominant stellar feedback mechanism in cosmological simulations, is a reasonable one based on a large amount of theoretical and observational evidence.

I’m more than happy to answer any questions anyone has.